Here’s an example of how easy it is to see things that don’t exist. It’s from a real piece of research (mine). By way of background, I’ve been doing some work with computer models of neurons in the cortex (NB this isn’t artificial neural networks, which were all the rage in the 1980s and 90s). Broadly speaking, I’ve been looking at the cross-over between two different models – (a) a very detailed model of neurons, including explicit modelling of the ionic currents across membranes that lead to action potentials (a neuron ‘firing’), and (b) a more statistical approach in which we only consider firing rates, rather than modelling every firing event.

What I’ve been trying to do is to take output from the simpler firing rate model (b) and reconstruct a pattern of firing events that is consistent with it – i.e. reverse engineer the detail. The aim is then to see how this compares with model (a), where the detail was in there to start with.
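To make 'reconstructing firing events from a firing rate' a bit more concrete, here is a minimal sketch of one common way to do it: treat each small time step as a coin flip whose probability of a spike is rate × step length. This is an illustrative assumption, not necessarily the method used in the research described here, and the function name `spikes_from_rate` and its parameters are made up for the example.

```python
import random


def spikes_from_rate(rate_hz, dt, rng):
    """Draw spike times from a (possibly time-varying) firing rate.

    rate_hz[i] is the firing rate in time step i, and dt is the step
    length in seconds. Each step independently contains a spike with
    probability rate * dt, which approximates a Poisson process as long
    as rate * dt is much smaller than 1.
    """
    times = []
    for i, rate in enumerate(rate_hz):
        if rng.random() < rate * dt:
            times.append(i * dt)
    return times


# Hypothetical example: one neuron at a steady 20 Hz for 0.5 s, 1 ms steps.
rng = random.Random(1)
example_times = spikes_from_rate([20.0] * 500, 0.001, rng)
```

Note that a scheme like this produces neurons that fire independently of one another by construction, so any spatial correlation would have to come from the rates themselves.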

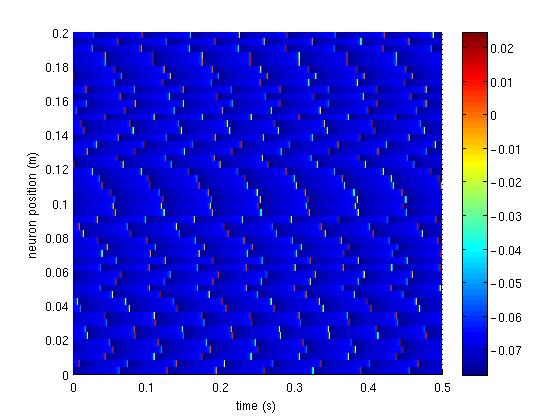

What I was hoping to see from the computer output was sequences of firing events that were strongly correlated in space. The picture above shows what I got (it is just one small piece of a much bigger simulation). Time is along the x-axis (horizontal), position (i.e. different neurons) along the y-axis (vertical), and colour gives the neuron voltage. A neuron firing is therefore shown as a small, bright, vertical bar.

Now, at first glance this looks promising. There’s a chunk of neurons in the middle that appear to be firing pretty much together. And at the bottom, neurons appear to be ‘pairing’, so a neuron fires with its neighbour. So, having plotted this, I was quite happy.

But then came the let-down. I actually analyzed the full output (much longer than the 0.5 seconds shown here, and more neurons too) in a systematic, mathematical manner, independent of my gut feeling. The result? Any correlations I’m seeing are purely imagined (or, if they exist, are very small indeed). I am ‘seeing things’ in the picture. (NB Yes, there certainly are correlations in time – each neuron fires at a fairly constant rate, but there aren’t any correlations between neighbouring neurons, which is what I wanted to see.)
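For anyone who wants to try this sort of check on their own data, here is a minimal sketch of one way to test for correlations between neighbouring neurons. It is not the analysis used for the result above; it is one standard approach, written under a few stated assumptions. The spikes are assumed to have been counted in time bins (`counts[i][t]` is the number of spikes of neuron `i` in bin `t`), the measure is a plain Pearson correlation between adjacent neurons, and the baseline comes from shifting each neuron's train by a random amount in time. The shift keeps each neuron's own rhythm intact but scrambles how it lines up with its neighbours. All function names are hypothetical.

```python
import random
from statistics import mean


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / (sxx * syy) ** 0.5


def neighbour_correlation(counts):
    """Average correlation between each neuron and the next one along."""
    return mean(pearson(counts[i], counts[i + 1]) for i in range(len(counts) - 1))


def shifted_null(counts, n_shuffles, rng):
    """Neighbour correlation after random circular time shifts of every neuron."""
    n_bins = len(counts[0])
    null = []
    for _ in range(n_shuffles):
        shifted = []
        for row in counts:
            k = rng.randrange(n_bins)
            shifted.append(row[k:] + row[:k])
        null.append(neighbour_correlation(shifted))
    return null


# Made-up data: 20 neurons firing independently, 500 time bins each.
rng = random.Random(0)
counts = [[1 if rng.random() < 0.1 else 0 for _ in range(500)] for _ in range(20)]

observed = neighbour_correlation(counts)
null = shifted_null(counts, 200, rng)
# Fraction of shifted data sets at least as correlated as the real one.
p_value = sum(abs(v) >= abs(observed) for v in null) / len(null)
```

If the observed value sits comfortably inside the spread of the shifted values, there is no evidence of neighbour correlations, however convincing the picture looks.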

It’s often the case that we see what we want to see in data when it’s just presented to us in raw form. We’re great at ‘seeing’ patterns that just don’t exist. Analyze the data systematically, and often these patterns disappear. Frustrating in this case – I was hoping that they’d be real, but that’s not the result I got.